Guest feedback is one of the most valuable data sources available to campground operators — and one of the most inconsistently used. Most campgrounds receive feedback through a combination of online reviews, direct complaints to front desk staff, and occasional emails. Very few have systematic processes for collecting feedback, analyzing it, and feeding it into operational improvement decisions.

Building a structured feedback system transforms scattered complaints and compliments into actionable operational intelligence.

The Feedback Collection Ecosystem

Guest feedback reaches campground operators through multiple channels, each with different characteristics:

Online reviews (Google, TripAdvisor, Campendium, The Dyrt): Public, permanent, and seen by prospective guests. Typically posted after departure. Skewed toward guests who were either very satisfied or very dissatisfied, because guests with a middling experience often don’t leave a review. The most consequential feedback channel for reputation management.

Direct surveys: Operator-controlled, can be highly structured, and typically capture a broader range of the guest population than self-selected reviewers. Can be deployed at check-out, via post-departure email, or in-stay via QR code.

In-person feedback: Comments made to staff, in-person complaints, and informal feedback during interactions. Hard to capture systematically; often filtered by staff before reaching management.

Social media: Tags, mentions, and DMs on Instagram, Facebook, and other platforms. Public unless sent as private messages, visible to brand followers, and requires active monitoring to capture.

Maintenance and service request logs: Indirect feedback — the types of problems guests report reveal what’s not working on the property.

A mature feedback system collects from multiple channels, centralizes the data, and makes it analyzable.

Designing Guest Surveys

A well-designed guest survey captures actionable information without burdening guests with a tedious questionnaire.

Post-departure email survey (primary feedback tool): Sent 24–48 hours after checkout. The optimal length is 5–8 questions that can be completed in 3–4 minutes. Longer surveys see sharply reduced completion rates.
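If your reservation system exports checkout times, the send-time rule is easy to encode. A minimal Python sketch, assuming checkout times are available as `datetime` values; the helper name `survey_send_time` and its `delay_hours` parameter are hypothetical, not part of any survey tool's API.

```python
from datetime import datetime, timedelta

# Send window from the guideline above: 24-48 hours after checkout.
MIN_DELAY_HOURS = 24
MAX_DELAY_HOURS = 48


def survey_send_time(checkout: datetime, delay_hours: int = 24) -> datetime:
    """Return when to send the post-departure survey (hypothetical helper)."""
    if not MIN_DELAY_HOURS <= delay_hours <= MAX_DELAY_HOURS:
        raise ValueError(
            f"delay_hours must be between {MIN_DELAY_HOURS} and {MAX_DELAY_HOURS}"
        )
    return checkout + timedelta(hours=delay_hours)


checkout = datetime(2024, 7, 14, 11, 0)
print(survey_send_time(checkout))  # 2024-07-15 11:00:00
```

Defaulting to 24 hours keeps sends at the early end of the window, where the FAQ below notes completion rates tend to be higher.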

Essential survey questions:

- Net Promoter Score (0–10 likelihood to recommend) or an overall satisfaction rating (1–5 stars)

- Satisfaction with specific touchpoints (check-in experience, site condition, bathhouse cleanliness, staff friendliness)

- What did you enjoy most about your stay? (open text)

- What would you suggest we improve? (open text)

- Would you return? (Yes/No/Maybe)
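If you use Net Promoter Score, the standard calculation groups 0–10 answers to "How likely are you to recommend us?" into promoters (9–10), passives (7–8), and detractors (0–6), then subtracts the percentage of detractors from the percentage of promoters. A minimal Python sketch; the function name and sample responses are illustrative.

```python
def net_promoter_score(scores: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6), on a 0-10 scale."""
    if not scores:
        raise ValueError("need at least one response")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("NPS responses must be on a 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    # Passives (7-8) count toward the total but toward neither group.
    return 100 * (promoters - detractors) / len(scores)


responses = [10, 9, 9, 8, 7, 6, 10, 3, 9, 8]
print(net_promoter_score(responses))  # 30.0
```

The result ranges from -100 (all detractors) to +100 (all promoters).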

Touchpoint-specific questions: Rating individual operational elements, rather than only overall satisfaction, shows where improvement is needed. A campground with an 8/10 overall rating might score 6/10 on bathhouse cleanliness, an operational gap that the overall score would hide.
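To surface gaps like that automatically, compare each touchpoint's average with the overall average. A minimal Python sketch using made-up ratings on a 1–10 scale; the touchpoint field names and the 1-point `margin` are hypothetical choices, not a standard.

```python
from statistics import mean

# Hypothetical survey responses: each dict maps a touchpoint to a 1-10 rating.
responses = [
    {"overall": 8, "check_in": 9, "site_condition": 8, "bathhouse": 6, "staff": 9},
    {"overall": 9, "check_in": 9, "site_condition": 9, "bathhouse": 7, "staff": 10},
    {"overall": 7, "check_in": 8, "site_condition": 8, "bathhouse": 5, "staff": 8},
]


def touchpoint_gaps(responses, overall_key="overall", margin=1.0):
    """Return the overall average and touchpoints trailing it by more than `margin`."""
    overall_avg = mean(r[overall_key] for r in responses)
    touchpoints = {k for r in responses for k in r if k != overall_key}
    gaps = {}
    for tp in sorted(touchpoints):
        avg = mean(r[tp] for r in responses if tp in r)
        if overall_avg - avg > margin:
            gaps[tp] = round(avg, 2)
    return overall_avg, gaps


overall, gaps = touchpoint_gaps(responses)
# Flags only "bathhouse" (average 6 against an overall average of 8).
print(overall, gaps)
```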

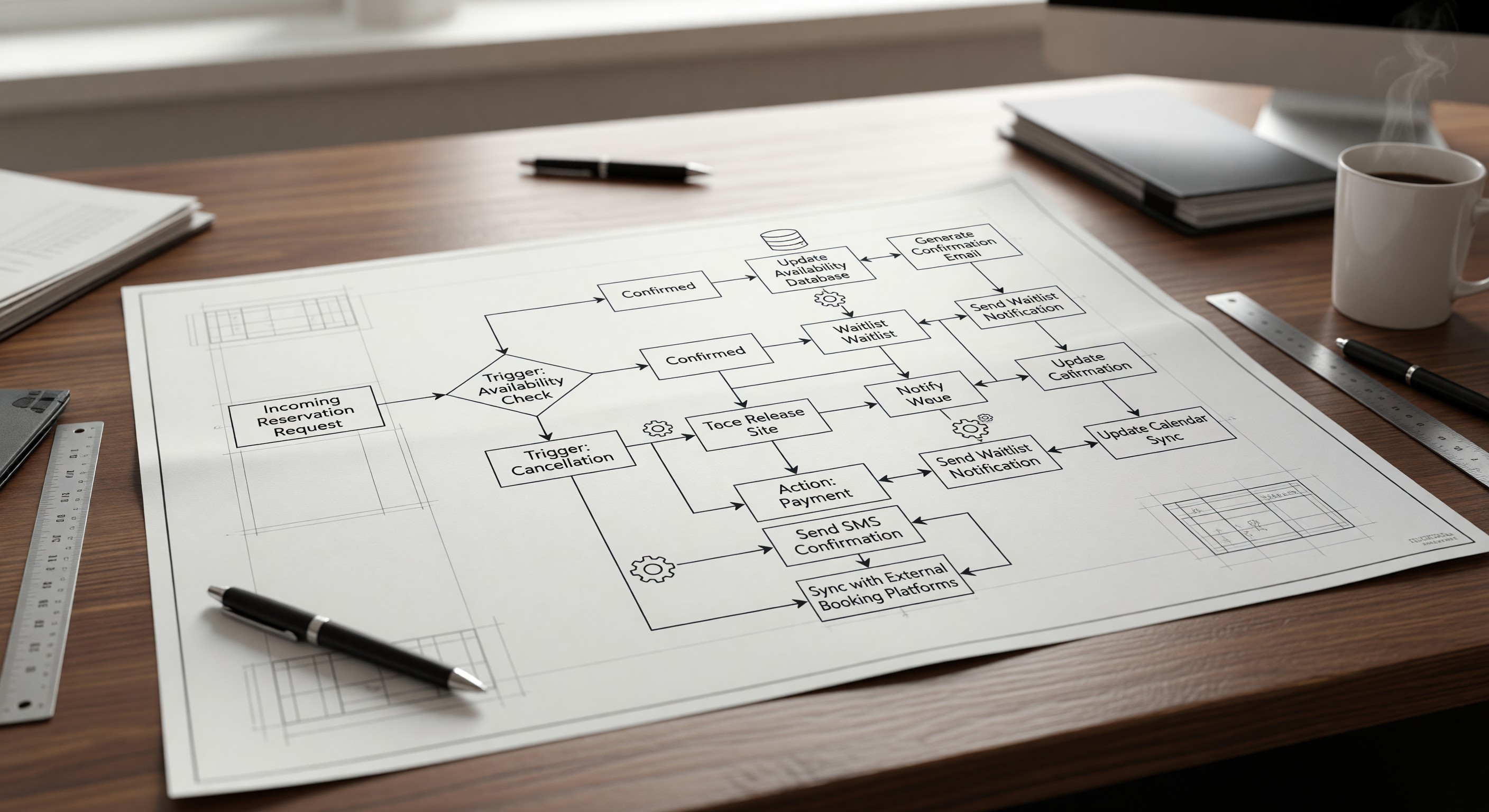

Survey tools: SurveyMonkey, Typeform, Google Forms, and Jotform all support guest feedback surveys. Integrating the survey tool with your reservation system, so that checkout automatically triggers the survey email, removes the manual step of sending it.

Analyzing Feedback for Operational Insights

Raw feedback data is only useful when analyzed for patterns. The volume of feedback a busy campground receives makes systematic analysis more practical than reviewing each response individually.

Sentiment scoring: Tools that automatically score text responses for positive or negative sentiment (many survey platforms include this) allow quick identification of responses requiring attention.
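Built-in platform features are likely more sophisticated than this, but the core idea (score each comment, flag the negative ones) can be shown with a much cruder technique: counting words from small positive and negative word lists. A minimal Python sketch; the word lists, function names, and threshold are hypothetical. It ignores negation, so "not clean" still scores as positive.

```python
import re

# Tiny illustrative word lists; a real lexicon would be far larger.
POSITIVE = {"clean", "friendly", "great", "quiet", "beautiful", "helpful", "love"}
NEGATIVE = {"dirty", "broken", "loud", "rude", "noisy", "cold", "smelly"}


def sentiment_score(text: str) -> int:
    """Count positive words minus negative words (a crude lexicon approach)."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def needs_attention(comments, threshold=0):
    """Return comments whose score falls below `threshold`."""
    return [c for c in comments if sentiment_score(c) < threshold]


comments = [
    "Friendly staff and a beautiful, quiet site.",
    "The shower was broken and the bathhouse was dirty.",
    "Great stay, but the neighbors were loud.",
]
# Flags only the broken-shower comment; the mixed third comment nets to 0.
print(needs_attention(comments))
```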

Category tagging: Manually or automatically tagging feedback by topic (cleanliness, noise, facilities, staff, value) allows analysis by category. A month-by-month view of cleanliness ratings reveals whether seasonal staffing affects cleaning quality. Comparing ratings across site types or sections of the property reveals whether particular areas are underperforming.
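Automatic tagging can start as simple keyword matching. A minimal Python sketch, assuming feedback is stored as (month, text) pairs; the category keyword lists are hypothetical and would need tuning to how your guests actually write. Counting tags per month gives a month-by-month view by category.

```python
import re
from collections import Counter

# Hypothetical keyword lists; tune them to the words your own guests use.
CATEGORY_KEYWORDS = {
    "cleanliness": {"clean", "dirty", "trash", "smell", "spotless"},
    "noise": {"noise", "noisy", "loud", "quiet"},
    "facilities": {"shower", "showers", "bathhouse", "wifi", "hookup", "pool"},
    "staff": {"staff", "friendly", "rude", "helpful", "desk"},
    "value": {"price", "expensive", "value", "worth", "fee"},
}


def tag_feedback(text: str) -> list[str]:
    """Return every category whose keywords appear in the text (case-insensitive)."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return sorted(cat for cat, keywords in CATEGORY_KEYWORDS.items() if words & keywords)


# Hypothetical feedback as (month, text) pairs.
feedback = [
    ("2024-06", "Bathhouse was dirty and the shower was cold."),
    ("2024-06", "Friendly staff at the front desk."),
    ("2024-07", "Too loud at night, and the trash was overflowing."),
]

# Count mentions per (month, category); one comment can carry several tags.
counts = Counter((month, cat) for month, text in feedback for cat in tag_feedback(text))
for (month, cat), n in sorted(counts.items()):
    print(month, cat, n)
```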

Trend analysis: Comparing average ratings month-over-month and year-over-year tracks whether operational improvements are moving satisfaction metrics. If you invested in new shower fixtures and bathhouse cleanliness scores improved the following season, that’s measurable validation.
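Both comparisons reduce to differences between monthly averages. A minimal Python sketch with made-up bathhouse-cleanliness ratings (1–5) grouped by (year, month); the data and function names are hypothetical.

```python
from statistics import mean

# Hypothetical bathhouse-cleanliness ratings (1-5) grouped by (year, month).
ratings = {
    (2023, 6): [3, 4, 3, 3],
    (2023, 7): [3, 3, 4, 2],
    (2024, 6): [4, 5, 4, 4],
    (2024, 7): [4, 4, 5, 5],
}


def monthly_averages(ratings):
    """Average rating per (year, month), in chronological order."""
    return {period: mean(vals) for period, vals in sorted(ratings.items())}


def month_over_month(avgs):
    """Change vs. the preceding calendar month, where that month has data."""
    changes = {}
    for (y, m), avg in avgs.items():
        prev = (y, m - 1) if m > 1 else (y - 1, 12)
        if prev in avgs:
            changes[(y, m)] = round(avg - avgs[prev], 2)
    return changes


def year_over_year(avgs):
    """Change vs. the same month one year earlier, where that month has data."""
    return {
        (y, m): round(avg - avgs[(y - 1, m)], 2)
        for (y, m), avg in avgs.items()
        if (y - 1, m) in avgs
    }


avgs = monthly_averages(ratings)
mom = month_over_month(avgs)
yoy = year_over_year(avgs)
print(mom)
print(yoy)
```

Year-over-year comparisons are usually the fairer test for a seasonal business, since they compare like months (June with June) instead of mixing shoulder-season and peak-season guests.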

Text analysis: Open-text responses to “what would you improve?” are the richest source of operational insight. Manually reviewing a sample of these responses — particularly from guests who gave low ratings — often reveals specific, actionable improvements that structured questions don’t capture.
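Pulling that sample can be automated. A sketch, assuming each response is a dict with a numeric `rating` and an open-text `improve` field (both hypothetical field names); the cutoff of 6 and sample size of 20 are illustrative defaults, not established recommendations.

```python
import random


def low_rating_sample(responses, max_rating=6, k=20, seed=None):
    """Randomly sample up to k low-rated responses that include improvement text."""
    low = [
        r for r in responses
        if r["rating"] <= max_rating and r.get("improve", "").strip()
    ]
    rng = random.Random(seed)  # pass a seed for a repeatable sample
    return rng.sample(low, min(k, len(low)))


responses = [
    {"rating": 3, "improve": "Fix the showers in the north bathhouse."},
    {"rating": 9, "improve": "Nothing, great stay."},
    {"rating": 5, "improve": ""},
    {"rating": 6, "improve": "More shade at the pull-through sites."},
]
for r in low_rating_sample(responses, seed=1):
    print(r["rating"], r["improve"])
```

Random sampling keeps the review manageable in busy months without always reading the same earliest or latest responses.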

Closing the Feedback Loop

The most important and most often missed element of a feedback system is closing the loop — demonstrating to guests that their feedback led to change.

Responding to reviews: Responding to all reviews (covered in the marketing article) is the most visible way to close the feedback loop. When a guest mentions a problem in their review and the manager’s response acknowledges it and describes what’s been done, prospective guests see that management is responsive.

Direct follow-up for significant complaints: Guests who report specific problems via survey (a broken fixture that ruined their shower experience, a noise complaint that wasn’t resolved) deserve direct outreach acknowledging the problem and explaining the resolution. This is particularly important for guests who were otherwise satisfied but had one bad experience.

Season-start communication about improvements: A spring email to your past guest database that highlights specific improvements made based on their feedback — “You told us the pathways needed better lighting, and we’ve installed new LED lighting on the main path to the bathhouses” — demonstrates that feedback matters and makes guests feel heard.

Staff sharing of feedback: Sharing relevant guest feedback with the staff who can act on it creates the internal feedback loop that drives improvement. A front desk staff member who learns that guests consistently mention their helpfulness is motivated to maintain that behavior. A maintenance technician who sees that their work is mentioned positively in reviews understands the connection between their effort and guest outcomes.

Frequently Asked Questions

What survey completion rate should I expect? Post-departure email surveys typically achieve 15–35% completion rates, depending on how engaged your guest base is, how the email is designed, and how long after departure it’s sent. Rates are higher when the email is sent within 24 hours of departure, when the survey is clearly short, and when there’s a brief explanation of why feedback matters. Incentivizing completion (entry into a drawing) can increase rates but may attract lower-quality responses.

Should I use one survey tool or integrate with a review platform? For most campground operations, a single survey tool that captures internal feedback — not publicly posted — is the right starting point. Internal surveys capture more honest feedback (guests are less performative when not writing publicly), allow you to identify and address problems before they become public reviews, and provide data you fully control. Supplement this with active management of public review platforms.

How do I handle feedback that is clearly unfair or factually incorrect? Respond professionally on public platforms, acknowledging the feedback while gently clarifying what actually occurred if the facts are incorrect. Don’t argue, don’t be defensive, and don’t discount the feedback entirely — even unfair feedback often contains a kernel of truth about a guest experience that fell short of expectations. Internally, treat unfair feedback as a signal about how your campground is perceived even if the specific complaint isn’t accurate.

What’s the right frequency for reviewing feedback with the management team? Weekly during peak season — a brief look at the past week’s survey results and new reviews before the weekend rush. Monthly — a more detailed analysis of trend data and open-text responses. Annually — a comprehensive review of the full season’s feedback informing next year’s operational priorities. This cadence ensures that feedback drives continuous improvement rather than being filed and forgotten.